Knowledge Base

Possible interview questions.

Interview questions for probability and statistics.

Can you give an intuitive definition of probability of an event?

Intuitively, probability is the measure of how likely an event is to happen, a value between 0 (impossible) and 1 (certain), often expressed as a fraction or percentage, representing the proportion of times an outcome occurs over many repetitions of an experiment, like a coin landing on heads roughly half the time in many flips.

What is the absolute necessery condition for the intuitive definition of probability to be true?

The intuitive definition of probability (classical/frequentist) says it's the ratio of favorable outcomes to total possible outcomes (P(E) = Favorable/Total). The crucial condition for this simple formula to work is that all possible outcomes must be equally likely (equally probable).

What is the difference between probability and statistics?

Probability tells you how to go from a population to a sample, and statistics tells you how to go from a sample to a population. So if one has a bin with red and blue balls and takes a number of balls from it, statistics estimates how many blue and red balls there could be in the bin. The probability estimates how likely it is that you take specific number of blue and red balls knowing the number of it in the bin.

Enlist and explain the most important combinatoric formulas.

- Number of possible permutations to arrange distinct objects:

Consider 5 balls of different colors, how many ways are there to put it on a table near each other on a line?

- Permutations with replacement:

How to order r distinct, independent objects with n options for each object (how many outcomes are there throwing r = 6 dies with n = 6 faces).

- Permutations without replacement:

How to order r samples out of n distinct objects (how many ways are there to take r = 5 cards out of a deck with n = 52 distinct cards).

- Combinations with replacement:

Choose r distinct objects with n options, where order of the objects does not matter, but options of the objects can repeat (choosing 2 ice-cream scoops out of 3 flavors, but scoops can be of the same flavor ).

- Combinations without replacement:

Choose r samples out of n available (like choose r = 3 persons from a group of n = 5 people, a person can not be chosen twice).

How is probability generally defined in terms of sets?

Let be a sample space, - an event or a subset from the sample space, then probability is a function defined between 0 and 1 describing how likely it is for the event to come. Probability function must sutisfy following rules:

- , and

- For disjoint events and , the probability of an overlapping event is a sum of the probabilities of the separate events:

How to express a possible outcome or element of a sample space in terms of sets?

Let be a possible outcome or an element of a sample space :

What are the differences between "element of", "subset" and "proper subset"?

- - "subset" means every element of a set is also in another set and these sets may be equal, e.g.:

- - "proper subset" means every element of a set is also in another set, but they are not equal, e.g.:

- - "element of" means an object is an element of a set, e.g.:

A set can also be an element of another set, but only as a single element and not as a combination or permutation of another single elements, e.g:

What is the difference between union and intersection of two sets?

- - union, means that elements are in A, or in B, or in both sets, e.g.:

- - intersection, means that elements are in A and B, e.g.:

What is the probability of the union of two non-disjoint event sets?

Addition rule of probability, or inclusion exclusion formula:

How to calculate a probability that an event won't happen, knowing its probability to happen?

Because the probability of a sample space is 1 and if we know the probability of an event to happen, we can calculate the needed probability as follows:

What is conditional probability of two events and how it is calculated? Give a simple example.

The conditional probability of an event A given event B is written as:

and is defined by:

where .

Imagine a sample space S as a big rectangle (all possible outcomes).

- Let's draw set B as a circle inside S, meaning all outcomes where event B happens.

- Let's draw set A as another circle, meaning all outcomes where A happens.

- The intersection of the circles represents outcomes where both A and B happen.

Now, if one knows that B has happened, the subsets are restricted to B. One ignores everything outside B and wants to know how much of B is also in A, which is the overlap of two events and rerpesents conditional probability.

Let's consider 100 students:

- 40 are in club A

- 50 are in club B

- 20 are in both A and B

Then, the probability that a student from club A is also in club B:

What is Bayes' rule?

Bayes' rule describes connection between conditional probabilities of two events:

Which follows from the definition of the probability of the intersection of two events:

Which in turn follows from the definition of conditional probability.

Define the law of total probability.

The law of total probability allows to compute the probability of an event A by breaking the sample space into disjoint cases (events that do not overlap) that together cover all possibilities. If is a partition of the sample space S (disjoint events that sum to the whole space, ), then:

What is the difference between prior and posterior probabilities?

The prior probability of an event is the initial belief about the event before observing any new evidence. It’s what is known or assumed before seeing data. The prior probability is defined by . The posterior probability is the updated belief about an event after observing evidence. It is defined by conditional probability .

When are two events independent?

Two events are independent when the probability of their intersection is equal to the multiplication of their prior probabilities:

Which is equivalent to the following:

How is independence of many events defined?

Infintely many events are said to be independent if any finite subset of them is independent. It means that the probabilities of the intersections of the finite subsets is defined by multiplication of their prior probabilities (pairs, triplets, quadruplets and so on).

What is conditional independence?

Events A and B are conditionally independent given E if the following is true:

Give a definition of random variables.

A random variable is a function that assigns a numerical value to any possible outcome from a sample space of an experiment, e.g. an experiment with two coin tosses:

Sample space is , now define , , and , where variable represents number of heads in a series of two coin tosses.

What is a distribution of a random variable?

Distribution of a random variable specifies the probability of all events associated with it.

Name two main types of random variables.

There are two main types of random variables discrete and continuous.

Give a formal definition of a discrete random variable.

A random variable X is discrete if there exists a finite or countable set such that for each and .

What is support of a discrete random variable?

It is a finite or countable set of values, where the probability of a variable taking these values is bigger then zero.

What is probability mass function of a discrete random variable?

The probability mass function of a discrete random variable X is a function that gives probability of the variable to take value from its support.

Give a formal definition of the Bernoulli distribution.

A random variable is said to follow a Bernoulli distribution with parameter , where , if probability mass function (PMF) is , for . Here represents success with probability and represents failure with probability . A Bernoulli distribution models a single binary experiment with success probability .

What is an indicator random variable?

An indicator random variable is a function that takes value 1 when a given event occurs and 0 otherwise. It is a Bernoulli-distributed random variable with parameter equal to the probability of the event.

What is Bernoulli trial?

A Bernoulli trial is a single random experiment with exactly two possible outcomes, usually called success and failure, where the probability of success is fixed.

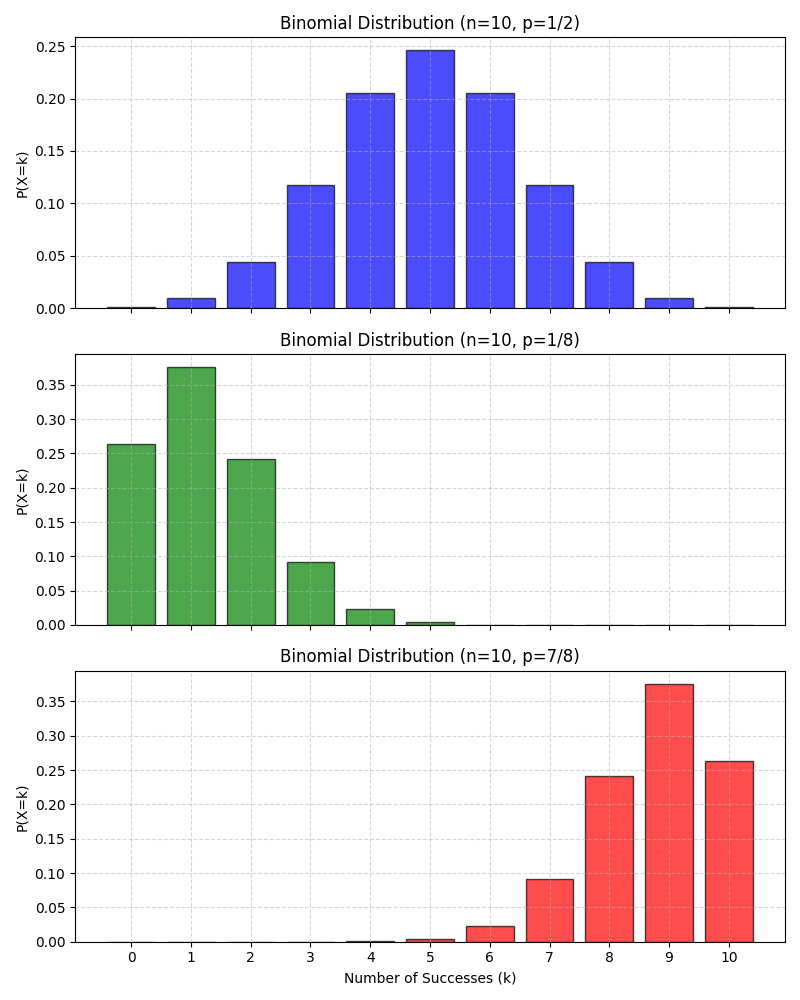

Give a formal definition of Binomial distribution.

A random variable is said to follow a Binomial distribution with parameters and if it represents the number of successes in independent Bernoulli trials, each with success probability . It is denoted by . A Binomial distribution models the number of successes in a fixed number of independent Bernoulli trials with the same success probability. The PMF of the Binomial distribution is defined as:

for . The first part of the PMF represents the number of combinations for successes out of trials and we are not interested in the order of the sucesses.

Here is the visualization of some Binomial distributions:

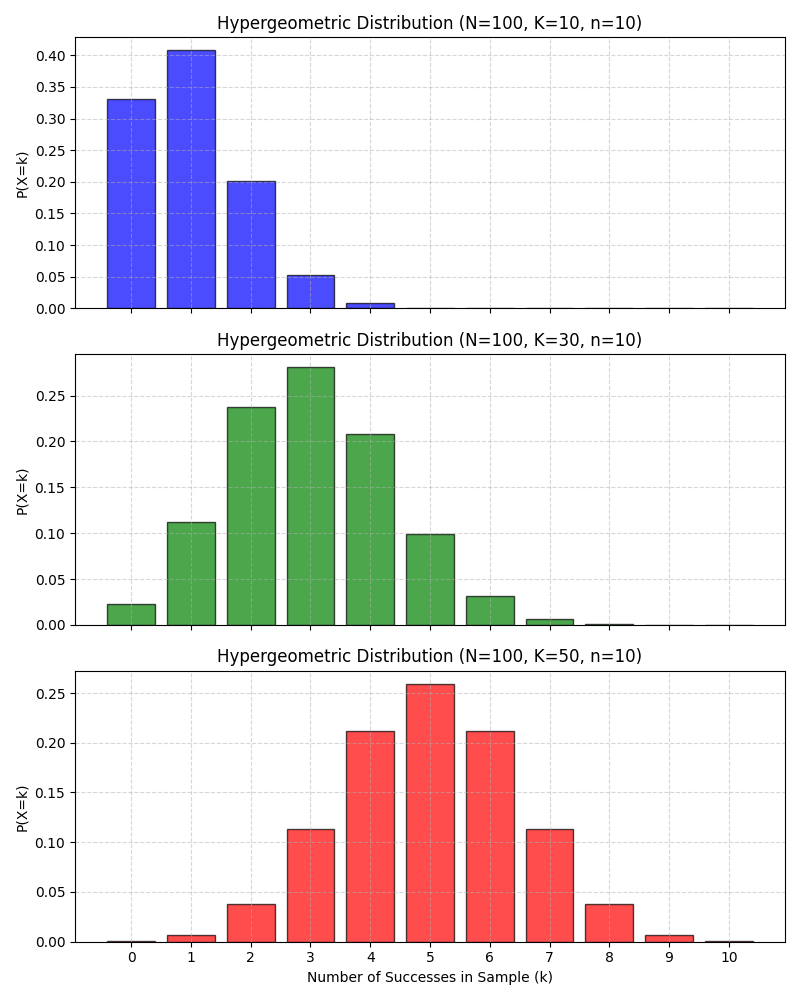

Give a formal definition of Hypergeometric distribution.

A discrete random variable has a Hypergeometric distribution if it counts the number of successes in a sample of size drawn without replacement from a finite population of size containing exactly successes. It is denoted by , where is the total population size, is the total number of sucesses in the population, - number of draws and - number of sucesses in the sample.

The PMF of the Hypergeometric distribution for is defined by:

where:

- - number of ways to choose sucesses out of available successes.

- - number of ways to choose remaining failures out of available.

- - number of total ways to choose elements out of available.

Here are plots of some Hypergeometric distributions:

Is there connection between Binomial and Hypergeometric distributions?

The binomial can actually be seen as a limit or approximation of the hypergeometric under certain conditions.

| Binomial | Hypergeometric |

| Independent trials | Dependent draws |

| Sampling with replacement | Sampling without replacement |

| Infinite or very large population | Finite population |

| Constant success probability | Changing success probability |

When the population is very large compared to the sample size, sampling without replacement behaves almost like sampling with replacement.

Give a formal definition of a discrete uniform distribution.

A discrete random variable is said to have a discrete uniform distribution over a finite set of values if each value is equally likely.

for .

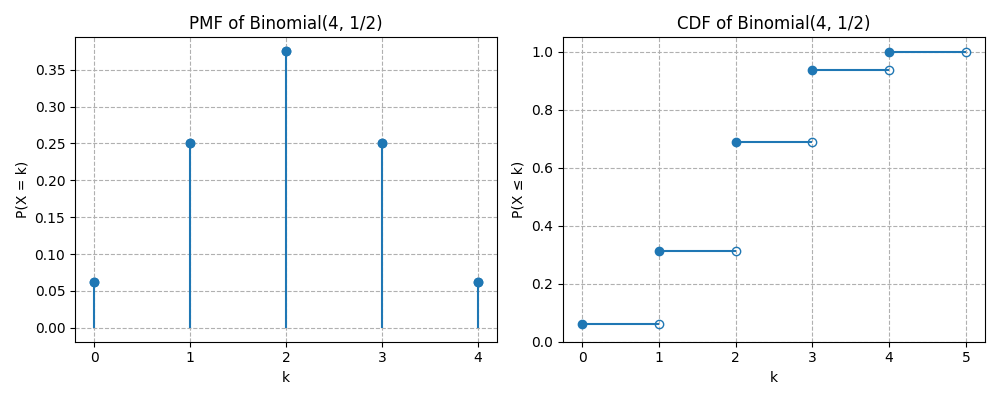

What is Cumulative Distribution Function (CDF)?

For a random variable the CDF is a function , for all .

Enlist and explain key properties of a CDF.

- Non-decreasing: If , then

- Right-continuous: at the points of jumps CDF is continuous from the right part.

- Convergent to 0 and 1 in the limits:

- Probability recovery:

How are PMF and CDF of a discrete random variable related?

The relation between PMF and CDF of a discrete random variable can be described as:

Here are the plots of PMF and CDF for :

Give a formal definition of a function of random variables.

A function of a random variable is a new random variable , defined by applying deterministic function to :

And formally for any outcome :

How to calculate PMF of a function of a random variable?

For a discrete random variable an , the PMF of is given by:

Here probabilities of all values of that map to the same are summed. For example lets consider a square function of a die roll:

- is die roll outcome with PMF , for

- values are 1,4,9,16,25,36

- The PMF of can be calculated as:

Define when two random variables are independent.

Two random variables and are independent if:

What is the distribution of the sum of two independent variables with binomial distributions?

If and and they are independent, then:

Give a definition of conditional independence of two random variables given a third random variable.

Random variables and are conditionally independent given a random variable for all and in support of if:

What is expectation of a discrete random variable?

Expectation or mean value of a discrete random variable is defined as a weighted average:

How do expectations of two random variables with the same distribution relate to each other?

Expectations of two random variables with the same distribution are equal.

How is the expectation of a sum of two random variables or a product of a constant and a random variable defined?

Due to linearity of expectations, these values for any random variables and and a constatnt are defined as:

and

How is monotonicity of expectation is defined?

For two random variables for which the enaquality is true with probability 1, the expectation relation is as follows: , where equality holds only if with probability 1.

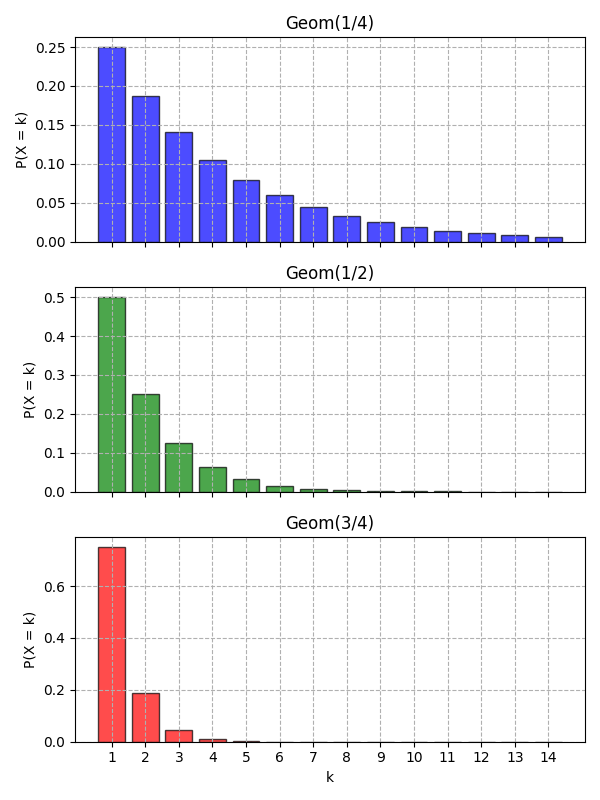

What is Geometric distribution of a discrete random variable?

Let be a sequence of independent and equaly distributed Bernoulli trials with

Define the random variable

Then is said to have a geometric distribution with parameter , denoted by:

The variable counts the number of trials until first success which occurs with a probability for each trial. The PMF of geometric distribution is defined for by:

The geometric distribution is memoryless:

The expectation for this distribution is:

Here are some plots of Geometric distributions for different probabilities:

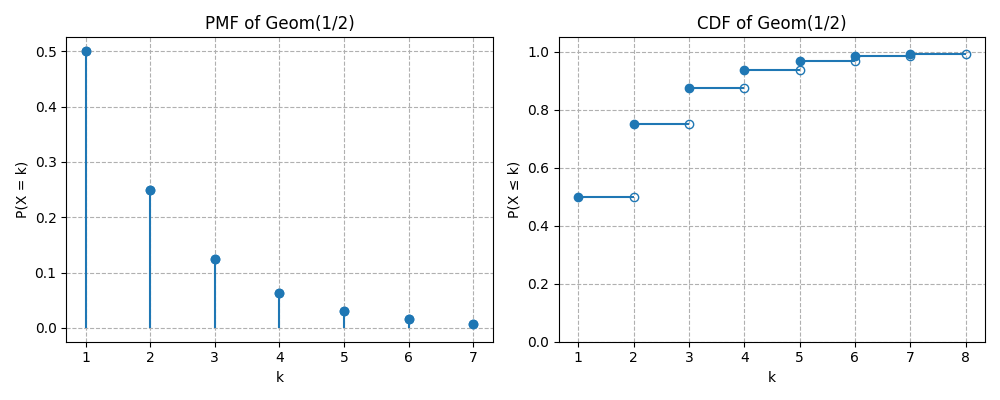

Give a formal definition of the Cumulative Distribution Function of Geometric distribution.

The CDF of a discrete random variable with distribution is defined for by:

Here is a plot of PMF versus CDF of Geometric distribution:

How is the Negative Binomial distribution defined?

If a random variable counts the number of trials to get -th success in a series of independent Bernoulli trials, then the variable has the Negative Binomial distribution, which is denoted by:

where is the probability of success.

How does PMF of the Negative Binomial distribution look like?

For the PMF of the Negative Binomial Distribution is defined by:

How is the Expectation of the Negative Binomial distribution defined?

The expectation of a random variable with the Negative Binomial distribution is defined by:

What are the main properties of indicator variables?

- for any positive integer

How is the Fundamental Bridge between probability and expection defined?

The probability of an event is the expected value of its indicator variable:

Give the formal definition of the Low of Unconcious Statistician (LOTUS).

If is a discrete random variable and is a function of real numbers, then:

Define the Variance of a discrete random variable.

The variance of a discrete random variable is given by:

Or an equivalent formula:

where

with be the PMF of .

What are the main properties of Variance?

Non-negativity:

.Translation invariance:

, for any constant .Scaling property:

, for any constant .Additivity for independent random variables and :

Give the formula of the variance of a discrete random variable with Binomial distribution.

If , then the variance of such variable is given by:

Give the formula of the variance of a discrete random variable with Hypergeometric distribution.

If , then the variance of such variable is given by:

Give the formula of the variance of a discrete random variable with Negative Binomial distribution.

If , then the variance of such variable is given by:

Give the formula of the variance of a discrete random variable with Geometric distribution.

If , then the variance of such variable is given by:

Give a formal definition and interpretation of Poisson distribution.

Let be a fixed real number. A discrete random variable is said to have a Poisson distribution with parameter , denoted:

if its PMF is defined as:

The Poisson distribution models the number of occurrences of events in:

- a fixed interval of time, area, volume, or space,

- when events occur independently,

- at a constant average rate ,

and cannot occur simultaneously.

Examples:

- Number of emails arriving in one hour.

- Number of radioactive decays per second.

- Number of customers entering a store in a day.

Here are the plots of PMF and CDF of :

How is the expectation of Poisson distribution is defined?

If , then its expectation is given by:

How is the variance of Poisson distribution is defined?

If , then its variance is given by:

How is the sum of two independent Poissons defined?

If and and independent of then:

How is the conditional distribution of a variable with Poisson distribution given a sum with another independent variable with Poisson defined?

If and and independent of , then the conditional distribution of given is .

How are Binomial and Poisson distributions related?

If with and such that remains finite, then can be approximated by .

Give a formal definition of a continuous random variable in terms of distribution.

A random variable has a continuous distribution if its CDF is differentiable. The function is allowed to be continuous but not differentiable at the end points as long as it differentiable at the rest of the points.

What is the main difference between discrete and continuous variables in terms of probability?

For a continuous random variable the probability in contrast to a discrete random variable. One can only define probability of a continuous random variable to fall into some range of values.

What is the Probability Density Function of a continuous random variable?

The probability density function (PDF) of a continuous random variable is defined by derivative of the CDF of this variable. The support of the variable is the set of all , where . The CDF itself is not a probability and it must be integrated for a range of values in order to calculate probability that a corresponding random variable falls into this range.

How to calculate CDF from PDF of a continuous random variable?

The CDF of a continuous random variable can be calculated from PDF as follows:

Which criteria must be sutisfied by a function to be a valid PDF?

In order to be a valid PDF a function must be:

- Non-negative:

- Integrate to 1:

How is the expectation of a continuous random variable defined?

The expectation, or mean value of a continuous random variable is defined as follows:

It can be thought of as a balancing point of a continuous distribution. The expectation in this case has also the property of linearity as for discrete variables.

Define the Low of the Unconcious Statistician (LOTUS) for continuous random variables.

If is a continuous random variable and is a function of real numbers, then:

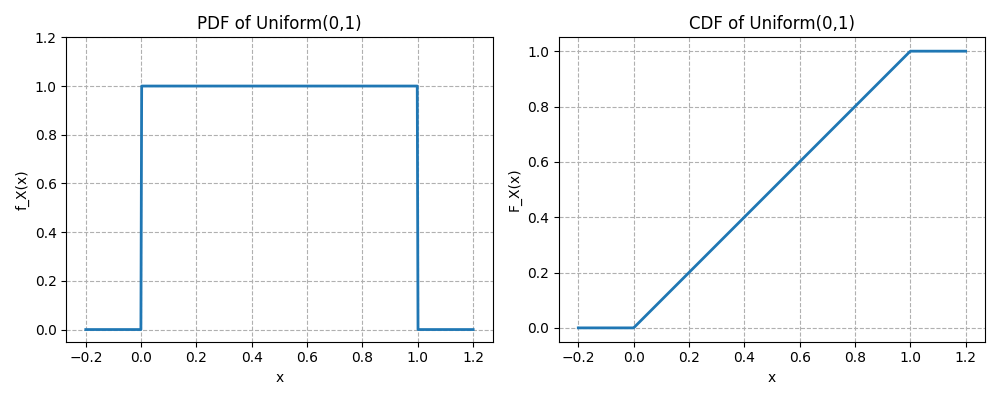

Give a formal definition of the Continuous Uniform distribution.

A continuous random variable has a Uniform distribution on the interval , where , if its PDF is

The CDF of the Uniform distribution is defined as:

Here are the plots of PDF and CDF of :

How is the Expectation of the continuous Uniform distribution defined?

The expectation of a random variable is defined as follows:

How is Variance of the continuous Uniform distribution defined?

The variance of a random variable is defined as follows:

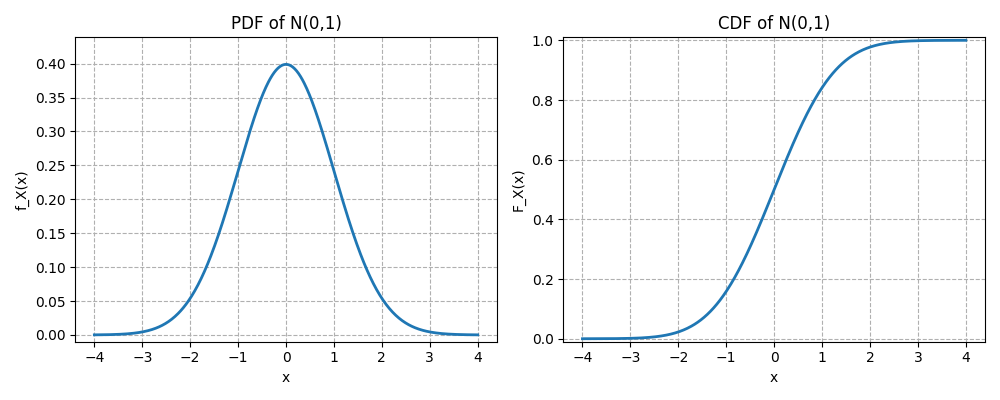

Give a formal definition of the Standard Normal Distribution.

A continuous random variable is said to have the standard normal distribution if its PDF is

How does the CDF of the Standard Normal distribution is defined?

The CDF of the standard normal distribution is defined as:

Here are the plots of PDF and CDF of the standard normal distribution:

Give the values of the expectation and variance of the Standard Normal Distribution.

The expectation of the standard normal distribution is and the variance is .

Give the formal definition of the Normal Distribution.

A continuous random variable is said to have a normal distribution with parameters mean and variance , denoted by , if its PDF is

How is the CDF of the Normal distribution is defined?

The CDF of the normal distribution is defined as:

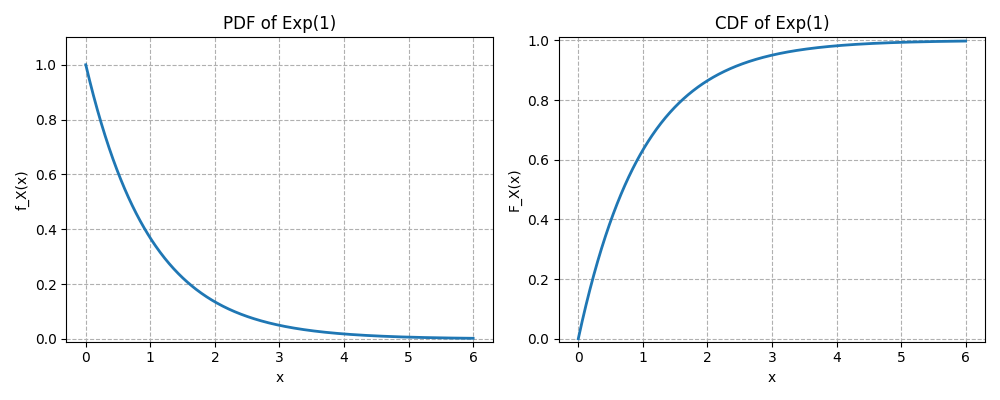

Give a formal definition of the Exponential distribution.

A continuous random variable is said to have an exponential distribution with a rate parameter , denoted by , if its PDF is

The exponential distribution models the waiting time until the first event in a process where events occur continuously and independently at a constant average rate.

How is the CDF of the Exponential distribution defined?

The CDF of the exponential distribution is defined as

Here are the plots of PDF and CDF of the exponential distribution:

How does Expectation of the Exponential distribution look like?

The expectation of the exponential distribution is defined as:

How does Variance of the Exponential distribution look like?

The variance of the exponential distribution is defined as:

What does memoryless property of a continuous distribution mean?

A continuous variable with support is said to be memoryless, if for all ,

The future does not depend on the past.

Which distribution does a memoryless continuous variable have?

If is a positive continuous random variable with memoryless property, then it has an exponential distribution.

Give a formal definition of a Poisson process.

A process of events occuring in continuous time is called a Poisson process with the rate , if two conditions hold:

- The number of occurances that take place in an interval of length is a random variable with distribution.

- The number of occurances in disjoint intervals are independent of each other.

How are interarrival times in a Poisson process defined?

An interarrival time in a Poisson process is the waiting time between the -st and -th event. E.g. is the time until the first event, - is the time between the first and the second event, etc. The interarrival times in a Poisson process have Exponentioal distribution and are independent of each other. The average waiting time is .

Give a formal definition of the Mean value of a distribution.

The mean value, or the center of mass of a distribution is defined by the expectation of the distribution (weighted average).

Give a formal definition of the Median value of a distribution.

Let be a real valued random variable. A real number is called median of distribution of if

and

Give a formal definition of the Mode value of a distribution.

For any real valued random variable , a real number is called mode value if it maximizes the PMF in a discrete case, and the PDF for continuous variable, for all .

What is the Skewness of a distribution.

The Skewness of a real-valued random variable with mean value and standard deviation is the third standardized moment of a distribution, which is defined as:

Which summary values are more appropriate for symmetric and non-symmetric distributions.

For non-symetric distributions mean and standard deviation values are missleading and median and interquartile range should (IQR) be used for skewed distributions. For a distribution with several peaks (multimodal distribution) mode value and intermodal spread (standard deviation within a peak) should be used.

Give a formal definition of interquartile range (IQR).

Let be a real-valued random variable with CDF . Define:

- the first quartile (lower quartile):

- the third quartile (upper quartile):

The interquartile range is defined as:

Give a formal definition of the Kurtosis of a distribution.

The Kurtosis of a real-valued random variable with mean value and standard deviation is the fourth standardized moment of a distribution, which is defined as:

Kurtosis measures the weight of the tails and the frequency of extreme deviations relative to a normal distribution. High Kurtosis (heavy tails) implies more frequent outliers and thus higher probability of extreme events. Lower Kurtosis means that the data are more evenly spread and thus there are fewer extreme values.

Give a formal definition and interpretation of a Moment Generating Function.

Let be a real-valued random variable. The Moment Generating Function (MGF) is:

defined for all real numbers in an open interval containing for which the expectation exists (is finite). The MGF generates moments of a distribution by differentiation:

E.g. mean value can be calculated from the MGF by first derivative. The MGF can be used to define the sum of two independent random variables as:

If the MGF exists in a neighborhood of , it uniquely determines the distribution of .

Example:

For X~Bern(p), the MGF is: .

Give a formal definition of joint CDF of two discrete random variables.

The joint CDf of two discrete random variables and is the function given by:

For variables the joint CDF is defined analogously.

Give a formal definition of joint PMF of two discrete random variables.

The joint PMF of two discrete random variables and is the function given by:

For variables the joint PMF is defined analogously. In order the function to be valid the following must be true:

What is the marginal PMF of two discrete random variables?

For two discrete random variables and the marginal PMF of is:

How is the conditional PMF of two discrete random variables defined?

For two discrete random variables and the conditional distribution PMF of given is

It is seen as a function of for fixed .

How is the joint PDF of two continuous random variables defined?

If two random variables and are continuous with joint CDF , their joint PDF is the derivative of the joint CDF with respect to and :

In order the joint PDF to be valid it is required it to be positive and integrate to 1.

How is the marginal PDF of two continuous random variables defined?

For two continuous random variables and with joint PDF , the marginal PDF of X is:

How is the conditional PDF of two continuous random variables defined?

For two continuous variables and with joint PDF the conditional PDF of given is

for all with .

How is the 2D LOTUS defined?

Let be a function from to . If and are discrete then the expectation of this function is:

For continuous case the expectation of the function is defined as follows:

Give a formal definition of the Covariance between two random variables.

The covariance between two random variables and is:

The two variables with zero covariance are independent and thus uncorrelated.

Emlist the main properties of Covariance.

- .

- .

- , for any constant

- , for any constant .

- .

- .

- . For : .

How is Correlation between two random variables defined?

The correlation between two random variables and is defined as:

Correlation is defined between and .

Give a formal definition of Multinomial distribution.

Let be the number of independent trials and let

be a probability vector, such that

A random vector

is said to have Multinomial distribution with parameters and , written

if is a number of outcomes of category in independent trials, where each trial results in one of the categories with probabilities .

How is the joint PMF of Multinomial distribution is defined?

For non-negative integers , such that:

the joint PMF is

Define multinomial conditioning.

If then

where .

Define Covariance for Multinomial distribution.

Let , then for , .

Give a formal definition of the Multivariate Normal distribution.

A vector of rundom variables is said to have multivariate normal distribution if every linear combination of its components has a Normal distribution.

How is the joint PDF of the Multivariate Normal distribution defined?

Let be an n-dimensional rundom vector with mean vector and covariance matrix , then is said to have Multivariate Normal distribution if its joint PDF is defined as:

where:

- is the determinant of the covariance matrix

- is symmetric and positive definite.

How is the joint Moment Generating Function defined?

Let be an n-dimensional rundom vector. The joint moment generating function of is the function:

if the expectation exists in a neighborhood of .

- Here .

Give a formal definition of the Beta Distribution.

Let be a continuous random variable defined on the interval . It is that has a Beta bistribution with parameters and , written

if its PDF is defined as:

where is the Beta function, defined as

Give a short interpretation of Beta Distribution.

- Shape flexibility:

- Beta distribution is bounded between 0 and 1

- Its shape depends on and values:

- -

- , - bell-shaped

- , - U-shaped

- , - skewed right

- , - skewed left

- Probability Interpretation: Often used to model random proportions, e.g., fraction of successes in Bayesian inference.

Give definitions for mean, variance and mode values of the Beta distribution.

- Mean:

- Variance:

- Mode (if ):

Give some examples of usage of Beta distribution.

- Bayesian posterior for a Bernoulli parameter p (Beta prior).

- Modeling proportions, probabilities, or rates between 0 and 1.

- Random variables constrained in a finite interval.

Give a formal definition of the Gamma Distribution.

Let be a continuous random variable with support . One says has a Gamma distribution with shape parameter and rate parameter , written

if its PDF is defined as

where is the Gamma function defined as

Give a short interpretation of the Gamma distribution.

Waiting time:

If events occur according to a Poisson process with rate , then is the time until -th event and has Gamma distribution .Sum of exponential variables:

If are independent, identically distributed variables and , then

Give definitions for mean, variance and mode values of the Gamma distribution.

- Mean:

- Variance:

- Mode (if ):

How are Beta and Gamma distributions connected?

The Beta distribution can be constructed from Gamma distributions. Let and be two independent random variables with the same rate . Define

Then:

and

Moreover, is independent of . And the normalization constant of the Beta distribution can be defined as

Give a formal definition of a Conditional Expectation given an event.

Let be an event with non-zero probability. If is a random variable then conditional Expectation in a discrete case is defined as

And for continuous case

where the conditional PDF is defined as follows:

How is the Low of Total Expectation defined?

Let be partition of events with non-zero probability from a sample space. Let be a random variable with support in this sample space. Then

is a total expectation of variable .

Give a definition of Conditional Expectation given a random variable.

If we have a function , then the conditional expectation of given , denoted is defined as a random variable . It means if takes the value of then becomes .

Enlist the properties of Conditional Expectation.

- If and are independent, then .

- For any function , .

- Linearity: and for any constant .

- Adam's low: .

- The random variable is uncorrelated with for any function .

Define the Conditional Variance.

The conditional variance of given is

which is equivalent to

Give a definition of the law of the total variance (Eve's law).

The law of the total variance is defined by the following formula:

Give a definition of Couchy-Schwarz inequality for marginal bound on a joint expectation.

For any random variables and with finite variances the following inequality is true:

Give a definition for Jensen's inequality for convexity.

Let be a random variable with existing finite expectation value. If a function is convex, then

Equality holds here only if:

- all are equal, or

- is linear on a convex hull of

Give a definition for Markov's bound on tail probabilities.

For any random variable and a constant ,

If frequently takes large values (i.e. large tail probability), then its expected value must also be large.

Give a definition for Chebyshev's bound on tail probabilities.

Let a random variable have mean and variance , then for any positive non-zero constant

Give a definition for Chernoff's bound on tail probabilities.

For any variable and constants and ,

Give a formal definition of the Weak Law of Large Numbers.

Let be independent and identically distributed random variables with sample mean and sample variance . Then for all

Give a formal definition of the Strong Law of Large Numbers.

Let be independent and identically distributed random variables with sample mean and sample variance . Then for sample mean

Give a formal definition of the Central Limit Theorem.

Let be independent and identically distributed random variables with sample mean and sample variance . Then with

where is the Standard Normal distribution.

Give a formal definition of the Chi-Square distribution.

Let be independent standard normal random variables, i.e. . The random variable

is said to have a Chi-Square distribution with degrees of freedom, denoted as . For it has the following PDF:

and for the function is zero. The Chi-square distribution is a special case of the Gamma distribution .

Give a formal definition of the Student-t distribution.

Let be a standard normal random variable, a chi-square distributed random variable with degrees of freedom and both are independent. Then the random variable

is said to follow a Student-t distribution with degrees of freedom, denoted by

The PDF of Student-t distribution for is defined as

The key properties of the Student-t distribution are as follows:

- Support: .

- Symmetry: symmetric about 0.

- Mean: for .

- Variance: for and for .

- Heavy tails: heavier tails than the normal distribution.

- Limit: as .

Give a formal definition of a Markov chain.

A sequence of random variables taking values from a state space is called Markov chain, if for all positive non-zero values of the following is true:

The quantity is called transition probability from the state to .

Give a definition of the Transition Martrix of a Markov Chain.

Let be a Markov chain with a state space and let be the transition probability from the state to . The matrix is called the transition matrix of the chain.

Give a formal defintion of -step transition probability.

The -step transition probability from the state to is the probability of being in the state after exactly transitions after being at . It is denoted by :

Note that

since the transitions and are independent due to Markov chain property.

What are recurrent and transient states in Markov chains?

State of a Markov chain is recurrent if starting from the probability of the chain returning to the state again is 1. Otherwise the state is transient, which means there is a positive probability of chain never returning to that state. Number of returns to a transient state has a Geometric distribution.

How are irreducible and reducible Markov chains defined?

A Markov chain with a transition matrix called irreducible if for any two states and there is a positive probability of getting from state to in a finitie number of steps. It means that for any two states and the entry of the transition matrix is positive. The Markov chain that is not irreducible is called reducible. In an irreducible Markov chain with finite state space all states are recurrent.

How is a period of a state in a Markov chain defined?

The period of a state i is defined as a great common divisor of all possible return times back to the state . If the period of a state is 1 the state is called aperiodic, which happens for states with self loops. In an irreducible Markov chain all states have the same period.

What is stationary distribution of a Markov chain?

Consider a Markov chain with finite or countable state space and transition matrix . A probability distribution is called stationary if

for all , with normalization condition

The main properties of the stationary distribution are:

- Stationary distribution is marginal, not conditional.

- For any irreducible Markov chain, there exists a unique stationary distribution.

- For an irreducible, aperiodic Markov chain with stationary distribution the transition matrix converges with to a matrix where each row is .

- For an irreducible Markov chain with stationary distribution , the value of is a reciprocal of an expected time to return to the state .

What is reversibility of a Markov chain.

Let be the transition matrix of a Markov chain. Let there be with and such that

for all and , then this equation is called reversibility condition and the Markov chain is called reversible. If the condition satisfies, then is the stationary distribution. If each column of the transition matrix sums to 1, then the Uniform distribution over all states is a stationary distribution of this Markov chain.

What problem does a Markov Chain Monte-Carlo Simulation solve?

Suppose you want to sample from a target distribution .

Example situations:

- A complicated posterior distribution in Bayesian inference.

- A high-dimensional distribution where normalization is unknown.

Often the distribution can only be computed up to a constant:

If direct sampling is hard a Monte-Carlo simulation can create a Markov's chain whose long-run distribution equals . Some MCMC algorithms are Metropolis-Hastings and Gibbs sampling.

Explain Metropolis-Hastings MCMC algorithm.

The core idea of the Metropolis-Hastings MCMC algorithm is:

- Start from a current state

- Propose a new candidate state

- Accept or reject the candidate based on a probability rule.

Over time, the chain produces samples approximating the target distribution.

The algorithm steps are as follows:

- Choose a starting point .

- Draw a new candidate state from a proposed distribution :

Common examples of proposed distribution:

- Normal

- Uniform around the current value

- Compute acceptance probability:

- Sample from a and if the result is success accept the proposed state else stay in the actual state .

- Repeat many times.

After a burn-in period, the samples approximate the target distribution. The core strength of this method is that you do not need to know the normalization constant of the target distribution in order to conduct the simulation.

Explain the Gibbs sampling MCMC algorithm.

The Gibbs sampling algorithm is a special case of MCMC methods used to generate samples from a joint probability distribution when direct sampling is difficult but sampling from conditional distributions is easy. It is closely related to the Metropolis–Hastings algorithm, but it simplifies the acceptance step by always accepting proposals.

Suppose you want samples from a joint distribution:

Direct sampling might be hard, but you can sample from each conditional distribution:

Gibbs sampling generates samples by cycling through variables and sampling each one conditioned on the others.

The algorithm steps are as follows:

- Choose a starting point:

- Make sequential conditional sampling at iteration :

After one full cycle you obtain the next sample vector.

Give a definition of Poisson processes in one dimension.

A Poisson process in one dimension is a stochastic process that models random points occurring along a line (usually time) where events happen independently and at a constant average rate . The number of arrivals in a constant time has distribution. The numbers of arrivals in disjoint time intervals are independent.

Explain the conditioning property of one-dimensional Poisson processes.

Let be a Poisson process with rate . Suppose we condition on:

meaning exactly events occured in the time interval . Now take a smaller interval with .

Then:

In a Poisson process with the rate conditional on the joint distribution of the arival times is the same as the joint distribution of the order statistics of independent and equally distributed with random variables.

Explain the superposition property of one-dimensional Poisson processes.

Suppose we have independent Poisson processes:

with rates:

If we define a new process by adding the event counts:

then the rate of this process is the following sum:

Think of events coming from multiple independent sources:

phone calls from different departments

photons from different emitters

customers arriving through different doors

If each source produces events randomly at rate , then the combined stream of events is still random with rate equal to the sum of the rates.

Explain the thinning property of one-dimensional Poisson processes.

Let be a Poisson process with rate . Suppose that each event is independently kept with probability and removed with probability . Define a new counting process as a number of kept events. Then is itself a Poisson process with rate . Similarly, the removed events form another process with rate and the two processes and are independent Poisson processes.

Give a definition of two-dimensional Poisson processes.

Let denote the number of points in a region . A point process on the plane is a two-dimensional Poisson process with intensity if it satisfies:

Poisson distribution of counts:

For any bounded region ,where

- is the area of the region

- is the average number of points per unit area.

Independent counts in disjoint regions:

If are disjoint regions in the plane, thenare independent random variables.

Such properties of one-dimensional Poisson processes as conditioning, superposition and thinning are also valid for two-dimansional Poesson processes.

What is data reduction of a distribution in statistical inference?

In statistical inference, data reduction of a distribution refers to the process of summarizing the information contained in a full dataset in a smaller, simpler form that still retains all the relevant information for estimating the parameters of interest. Essentially, it’s about compressing the data without losing information needed for inference.

Let be a sample from a distribution with parameter and a function of the data. Data reduction is the process of replacing the full dataset with a statistic such that inference about can be based on instead of the full .

Example:

Let , then the statistic

contains all information about , so you can do inference using only the sum instead of all .

How is sufficient statistic defined?

A statistic containing all information about parameter is called sufficient if the conditional distribution of the sample given the value does not depend on . E.g. the summation statistic for a Poisson sample is sufficient, because it is a smallest summary of the sample containing all information about the parameter .

Explain what minimal sufficient statistic is.

In statistical inference, a minimal sufficient statistic is the most compressed statistic that still contains all the information about a parameter in the sample. It represents the maximum possible data reduction without losing any information relevant to the parameter.

Let be a sample from a distribution with parameter . A statistic is minimal sufficient for if it is sufficient, and for any other sufficient statistic , it can be written as a function of , e.g. sample mean and variance of a normal distribution.

Explain what ancillary statistic is.

An ancillary statistic is a statistic whose probability distribution does not depend on the unknown parameter of the model. So it contains no information about the parameter, even though it is computed from the data.

Let be a sample from a distribution with parameter .Let be a sample from a distribution with parameter . A statistic is ancillary if its distribution is independent of .

Formally:

does not depend on .

Example:

Suppose there are two random variables with normal distribution

Consider the statistic

If we standardize by variance

its distribution is and its independent of .

Explain what complete statistic is.

Let be a sample from a distribution with parameter . is complete if a function satisfies for all only if with probability 1. If the expected value of a function of is zero for every parameter value, then the function must be identically zero. There are no non-trivial unbiased functions of that vanish for all parameters. Completeness means that the statistic contains no hidden redundancy. If you try to construct a function of the statistic whose expected value is always zero, the only possibility is the trivial function. So the statistic is informationally tight: it does not allow cancellation of information across parameter values.

What is the likelihood function in statistical inference?

The likelihood function describes how plausible different parameter values are given the observed data. Suppose is a random sample from a distribution with parameter and probability density (or mass) function . After observing the data

the likelihood function is defined as

If the observations are independent, this becomes

- In probability theory: the parameter is fixed and data are random.

- In likelihood: the data are fixed, and the function of the parameter is viewed.

So the likelihood measures how well each parameter value explains the observed data.

Example:

Suppose

with known variance.

The density function is

The likelihood function is

This function tells us which values of make the observed data most likely.

Explain the principle of equivariance in statistical inference.

Let be a parameter and an estimator of based on a sample . Suppose we apply a transformation to the parameter, giving a new parameter

The principle of equivariance states that the estimator of the transformed parameter should be the same transformation applied to the estimator of . So if is an estimator of then the estimator of should be .

What is a point estimator in statistical inference?

A point estimator is a statistic used to estimate an unknown parameter of a population by a single numerical value based on sample data.

Let

be a random sample from a population with parameter . A point estimator of is a function of the sample observations:

The value obtained from the sample is called the point estimate.

Example:

If we want to estimate the population mean , the sample mean

is a point estimator of .

Explain method of moments (MOM) for point estimation.

This method equates sample moments with population moments.

Steps:

- Compute population moments in terms of parameters.

- Replace them with sample moments.

- Solve for the parameter.

Example:

If , then estimator is

Explain Maximum Likelihood point estimator (MLE).

This method chooses the parameter value that maximizes the likelihood function.

If the likelihood function is

then the estimator is the value that maximizes .

Example:

For a normal distribution

Explain Bayes point estimator.

In Bayesian inference, the parameter is treated as a random variable with a prior distribution . After observing data , we compute the posterior distribution:

where:

- is likelihood

- is prior distribution

- is posterior distribution

The Bayes estimator is the value that minimizes the expected posterior loss.

Let

- be a loss function

- be estimator decision

The Bayes estimator is

which means choose the estimate that minimizes the expected loss under the posterior distribution.

Different loss functions lead to different estimators.

- Squared Error Loss:

The Bayes estimator becomes the posterior mean:

- Absolute Error Loss:

The Bayes estimator becomes the posterior median.

- 0–1 Loss:

The Bayes estimator becomes the posterior mode, also called the MAP estimator (Maximum A Posteriori).

Explain principal difference between classical and Bayesian statistical inference.

The main difference between classical and Bayesian approaches in Statistical Inference concerns how the unknown parameter is treated.

In classical (frequentist) inference, the parameter is considered a fixed but unknown constant, and probability describes the randomness of the sample data. Inference is based only on the likelihood derived from the observed data.

In Bayesian Statistics, the parameter is treated as a random variable with a prior distribution representing prior knowledge. Using Bayes' Theorem, the prior is updated with the observed data to obtain the posterior distribution, which is then used for estimation and inference.

Enlist methods of evaluating the point estimators.

1. Classical Properties

- Unbiasedness - the estimators expected value equals the true parameter:

Which means there is no systematic over- or underestimation.

- Consistency – the estimator converges to the true parameter as sample size increases:

- Efficiency – among unbiased estimators, the one with smaller variance is more efficient. Often compared to the Cramér–Rao lower bound.

- Mean Squared Error (MSE) – combines bias and variance to measure error:

- Sufficiency – an estimator based on a sufficient statistic uses all information in the sample about the parameter.

- Robustness – the estimator’s performance is stable under deviations from model assumptions or in presence of outliers.

2. Loss-Function and Risk-Based Evaluation

- Loss function measures the penalty for estimating by .

Examples:- Squared error:

- Absolute error:

- 0–1 loss: 0 if correct, 1 if incorrect

- Risk function evaluates the expected loss:

- Optimality: an estimator is optimal if it minimizes the risk for the chosen loss function:

Example (Bayesian context):

- Squared error → posterior mean

- Absolute error → posterior median

- 0–1 loss → posterior mode (MAP)

Give a definition of what is hypothesis and its testing in inference statistics.

In Statistical Inference, a hypothesis is a statement or assumption about a population parameter that we want to evaluate based on sample data.

- Null hypothesis (): the default assumption, usually representing no effect or no difference.

- Alternative hypothesis (): represents the claim we want to test, usually indicating an effect or difference.

Hypothesis testing is the procedure of using sample data to decide whether to reject in favor of or not. It involves:

- Formulating hypotheses and .

- Selecting a test statistic that summarizes the evidence from the data.

- Determining the sampling distribution of the test statistic under .

- Computing the p-value or critical region to assess evidence against .

- Making a decision:

- Reject if evidence is strong.

- Fail to reject if evidence is insufficient.

Hypothesis testing does not prove true, it only evaluates whether data provide enough evidence to reject . Decisions are made with a predefined significance level (), controlling the probability of rejecting true .

Explain Critical Region / Rejection Region Method for Hypothesis testing.

In the frequentist framework, the Critical Region (or Rejection Region) Method is a way to perform hypothesis testing by defining a region of the sample space where, if the observed value of the test statistic falls inside, we reject the null hypothesis .

- The test statistic summarizes the sample data in a way that is sensitive to deviations from

- The critical region is determined using a significance level , which is the maximum probability of rejecting true .

Steps:

- Formulate and .

- Choose a test statistic suitable for the hypothesis and data type.

- Determine the sampling distribution of the statistic under .

- Define the critical value(s) based on the significance level .

- Compare the observed statistic to the critical value:

- If inside the critical region - reject .

- If outside - fail to reject .

Most common test statistics are:

- Z-test (Population Mean, known).

- t-test (Population Mean, unknown).

- Chi-Square Test (Variance or Goodness-of-Fit).

- F-test (Comparing Two Variances).

- Likelihood Ratio Test (General Parametric Models).

- Non-Parametric / Rank-Based Tests.

Explain the Z-test statistic for hypothesis testing.

A Z-test is a parametric hypothesis test used to determine whether a sample mean is significantly different from a hypothesized population mean. It is based on the standard normal distribution (), when the population variance is known or when the sample size is large () due to the Central Limit Theorem.

One-sample Z-test statistic:

Where:

- - sample mean.

- - hypothesized population mean.

- - population standard deviation (known).

- - sample size. Two-sample Z-test compares the means of two independent populations with known variances:

When to Use a Z-Test:

- Population variance is known.

- The sample is large.

- Data are continuous and approximately normally distributed (small samples).

- Testing means (one-sample or two-sample).

Example:

A factory claims that its light bulbs last hours on average. A quality control engineer takes a sample of bulbs and finds:

- Sample mean: hours.

- Known population standard deviation: hours. Test at significance level () whether the mean lifetime is less than hours.

Step 1: Define Hypotheses

- Null hyposethis: .

- Alternative hypothesis: .

Step 2: Choose Test Statistic

- One-sample Z-test:

Step 3: Compute the Test Statistic

Step 4: Determine Critical Value / Rejection Region

- Significance level: (one-tailed, left).

- Critical Z-value: (, the value of is taken from the normal distribution).

- Rejection region: .

Step 5: Make a Decision

- Observed falls in the rejection region.

- Decision: Reject .

Step 6: Conclusion

At 5% significance level, there is sufficient evidence to conclude that the mean lifetime of the bulbs is less than 1200 hours.

Explain the t-test statistic for hypothesis testing.

A t-test is a statistical test used to determine whether a sample mean is significantly different from a hypothesized population mean when the population standard deviation is unknown.

- It uses the Student’s t-distribution, which accounts for extra variability due to estimating from the sample.

- The shape of the t-distribution depends on the degrees of freedom (df = n – 1).

- As the sample size increases, the t-distribution approaches the standard normal distribution.

One-sample t-test statistic:

where:

- - sample mean.

- - hypothesized population mean.

- - sample standard deviation.

- - sample size.

Two-sample t-test (independent samples):

When to Use a t-Test:

- Population variance is unknown.

- The sample is small.

- Data are approximately normally distributed.

- Testing means (one-sample or two-sample).

Example: A nutritionist claims that the average sodium content in a certain brand of soup is 500 mg per serving. A random sample of 15 soups has:

- Sample mean: mg

- Sample standard deviation: mg Test at 5% significance level () whether the average sodium content differs from 500 mg.

Step 1: State Hypotheses

- Null hyposethis: mg

- Alternative hypothesis: mg

Step 2: Choose Test Statistic

- One-sample t-test is appropriate since is unknown and .

Step 3: Compute the Test Statistic

Degrees of freedom:

Step 4: Determine Critical Value / Rejection Region

- Two-tailed test at , which means splitting in each tail.

- From t-table:

- Rejection region:

Step 5: Make a Decision

- Observed falls in the rejection region.

- Decision: reject .

Step 6: Conclusion

At 5% significance level, there is sufficient evidence that the average sodium content differs from 500 mg.

Explain the Chi-Square test statistic for hypothesis testing.

A Chi-Square test ( test) is a non-negative test statistic used to evaluate hypotheses about:

- Variances of a normally distributed population.

- Goodness-of-fit: whether observed frequencies match expected frequencies.

- Independence or association in contingency tables.

The test statistic follows a Chi-Square distribution with degrees of freedom depending on the test type.

When to Use a Chi-Square Test

- Population variance test:

- You want to test : for a normal population.

- Goodness-of-fit test:

- Data are categorical, and you want to compare observed versus expected frequencies.

- Test of independence / association:

- You have a contingency table (e.g., row × column categories) and want to see if variables are independent.

- Assumptions:

- Data are independent observations

- For variance tests: population is normal

- For categorical tests: expected frequency per category ideally ≥ 5

1. Chi-Square Test for Population Variance.

Test Statistic:

Where:

- - sample size

- - sample variance

- - hypothesized population variance

- degrees of freedom:

Decision: reject if the statistic falls outside the critical values from table.

Example: Variance test

A machine claims to fill bottles with variance in volume of . A sample of bottles gives sample variance . Test at 5% significance level if variance is different.

Step 1: Hypotheses

Step 2: Test Statistic

- degrees of freedom:

Step 3: Critical Values (two-tailed, )

- Left:

- Right:

- Rejection region: , or

Step 4: Decision

- Observed does not fall in the rejection region.

- Decision: fail to reject

Conclusion: No sufficient evidence to say variance differs from 4 .

2. Chi-Square Test for Goodness-of-Fit

Test Statistic:

Where:

- - observed frequency for category

- - expected frequency for category

- - number of categories.

Degrees of freedom: , where is the number of estimated parameters of the samples distribution.

Example:

A die is rolled 60 times, yielding counts:

| Face | 1 | 2 | 3 | 4 | 5 | 6 |

|---|---|---|---|---|---|---|

| Observed | 8 | 10 | 9 | 11 | 12 | 10 |

Test at if die is fair.

Step 1: Hypotheses

- die is fair, - die is not fair.

Expected frequency: for all faces.

Step 2: Test Statistic

Step 3: Degrees of Freedom

- ( because all faces are equally likely and there is no parameters to estimate).

- Critical value (, two-tailed is not needed for chi-squared, thus right tail test ):

Step 4: Decision

- Observed , thus fail to reject .

Conclusion: no evidance to suggest that the die is unfair.

3. Chi-Square Test for Independence (Contingency Table)

Test Statistic:

- degrees of freedom: df = (number of rows – 1) × (number of columns – 1)

- Decision: Reject if

The Chi-Square Test for Independence is used in Statistical Inference to determine whether two categorical variables are statistically independent or whether there is an association between them.

It is based on comparing:

- Observed frequencies (actual counts in the data)

- Expected frequencies (counts we would expect if the variables were independent)

If the observed counts differ greatly from the expected counts, the variables are likely not independent.

Example:

Suppose we want to test whether gender is independent of preference for a product.

A survey of 100 people gives:

| like product | dislike product | total | |

|---|---|---|---|

| Male | 30 | 20 | 50 |

| Female | 10 | 40 | 50 |

| Total | 40 | 60 | 100 |

Step 1: Hypotheses

: Gender and preference are independent.

: They are dependent

Step 2: Compute Expected Frequencies:

Using

Male like:

Male dislike:

Female like:

Female dislike:

Expected table:

| like | dislike | |

|---|---|---|

| Male | 20 | 30 |

| Female | 20 | 30 |

Step 3: Compute statistic

Step 4: Degrees of Freedom

Step 5: Critical Value

At

Step 6: Decision

Therefore we reject the null hypethesis.

Conclusion

There is significant evidence that gender and product preference are associated (not independent).

Explain the F-test statistic for comparing two variances.

The F-test is used in Statistical Inference to determine whether two populations have the same variance. It compares the variability of two independent samples. The test statistic follows the F-distribution, introduced by Ronald A. Fisher, which is the distribution of the ratio of two independent scaled chi-square random variables.

The F-test answers questions such as:

- Do two production machines produce items with the same variability?

- Are two measurement methods equally precise?

- Do two populations have equal variances?

This test is also the basis for analysis of variance (ANOVA).

Hypotheses:

For two populations:

where and are population variances.

Test Statistic

The F statistic is

where

- - sample variance of sample 1.

- - sample variance of sample 2.

Usually the larger variance is placed in the numerator so that .

Distribution

Under :

where

- and are sample sizes

- degrees of freedom:

Assumptions

The F-test requires:

- Independent samples

- Normally distributed populations

- Random sampling

Example:

Suppose two machines produce metal rods, and we want to check if their variability in length is the same.

Samples:

| Machine | Sample size | Sample variance |

|---|---|---|

| A | 10 | 25 |

| B | 12 | 10 |

Step 1: Hypotheses

Step 2: Test Statistic

Place the larger variance in the numerator:

Step 3: Degrees of Freedom

So

Step 4: Critical Value

At significance level (right tail):

Step 5: Decision

Therefore do not reject the null hypothesis.

Conclusion

There is no sufficient evidence that the two machines have different variances.

Explain Likelihood Ratio Test (LRT) for General Parametric Models.

The Likelihood Ratio Test (LRT) is a general method in Statistical Inference for testing hypotheses about parameters in parametric statistical models.

The main idea is to compare:

- the maximum likelihood under the null hypothesis

- the maximum likelihood without restrictions

If the unrestricted model explains the data much better, the null hypothesis is rejected.

Let

- be likelihood function of parameter

- - the full parameter space

- - parameter values allowed under

The likelihood ratio statistic is

Where:

- numerator = maximum likelihood assuming

- denominator = maximum likelihood in the full model

Thus

Small values of indicate evidence against .

Test Statistic

Usually we use

A fundamental result (Wilks’ theorem) states that, for large samples:

where is the difference in number of parameters between models.

Decision Rule

Reject if

Example:

Suppose we observe data from a normal distribution:

Assume variance is known and is equal 1.

We want to test

Sample data:

Step 1: Likelihood Function

For normal data with variance 1:

The maximum likelihood estimator is

Step 2: Maximum Likelihoods

Under the full model:

Under the null hypothesis:

Step 3: Compute Statistic

Step 4: Distribution

Number of restricted parameters:

So

Critical value at :

Step 5: Decision

Therefore reject

Conclusion

There is significant evidence that the mean is different from 0.

Explain Non-Parametric / Rank-Based Tests.

Non-parametric (rank-based) tests are hypothesis tests that do not assume a specific parametric distribution for the population (e.g., normal distribution). Instead of relying on the raw values, they typically use ranks of the data.

They are especially useful when:

The distribution is unknown or not normal

Sample sizes are small

The data are ordinal (ranked) rather than numerical

The data contain outliers that would distort parametric tests

Non-parametric tests are often considered distribution-free alternatives to parametric tests such as the Z-test, t-test, or ANOVA.

Instead of using the raw data values , we:

- Sort the observaions

- Replace values with ranks

Example:

| Value | Rank |

|---|---|

| 3 | 1 |

| 5 | 2 |

| 8 | 3 |

| 10 | 4 |

Statistical tests are then performed on ranks rather than values. This makes the tests robust to distributional assumptions.

Explain p-value method for Hypotheses testing.

The p-value method is a common procedure in Statistical Inference for deciding whether to reject or not reject a null hypothesis based on observed data. Instead of comparing the test statistic directly with a critical value, we compute the probability of observing a result at least as extreme as the one obtained, assuming the null hypothesis is true.

The -value is:

observing a test statistic as extreme or more extreme than the observed one is true .

Thus it measures how incompatible the data are with the null hypothesis.

- Small -value: strong evidence against .

- Large -value: data are consistent with .

Let be the significance level (common 0.05).

Then the decision rule:

- If - reject .

- If - do not reject .

Example: Z-Test

Suppose a company claims the average battery life is 50 hours.

Sample data:

- sample mean

- population standard deviation

- sample size .

Test:

Significance level:

Step 1: Compute Test Statistic

Step 2: Compute p-Value

For a two-sided test:

From the standard normal distribution:

So

Step 3: Decision

Therefore reject .

What are Union Intersection and Intersection Union tests?

In Statistical Inference, Union–Intersection (UI) and Intersection–Union (IU) tests are general frameworks used when hypotheses involve multiple parameters or multiple conditions. They describe how a global hypothesis test can be constructed from several simpler tests.

1. Union–Intersection Test (UIT)Idea:

The null hypothesis is an intersection of several conditions, while the alternative is their union.

Interpretation:

- : all conditions hold simultaniously.

- : at least one condition is violated.

Decision Rule

Reject if any of the individual tests rejects its corresponding

2. Intersection–Union Test (IUT)

Idea:

Here the hypotheses are reversed. The null hypothesis is a union, and the alternative is an intersection.

Interpretation:

- : at least one condition holds.

- : all conditions must hold simultaniously.

Decision Rule

Reject only if all individual tests reject.

Explain Analysis of Variance (ANOVA) method.

ANOVA (Analysis of Variance) is a statistical method used to test whether the means of several populations are equal. It extends the idea of the two-sample t-test to more than two groups. ANOVA tests whether differences among sample means are statistically significant or simply due to random variation. Typical question is if several groups have the same population mean. It can be interpreted as a Union–Intersection Test (UIT).

Example applications:

- Comparing effectiveness of different drugs

- Comparing teaching methods

- Comparing production processes

Hypotheses:

Suppose we have groups with means:

Hypotheses:

:

: at least one differs

ANOVA analyzes two sources of variability in the data:

- Between-group variability: variation between the group means

- Within-group variability: variation inside each group

If group means are truly equal, these two types of variation should be similar.

If the between-group variation is much larger, the means are likely different.

Let

- be the observation in group .

- - mean of group .

- - overall mean.

Toatl variability:

This splits into:

where

Between-group variation

Within group variation

We divide sums of squares by their degrees of freedom.

Between groups:

Within groups:

where

- k - number of groups

- N - total number of observations

The ANOVA statistic is represented by F-statistic:

Under the null hypothesis:

A large F value suggests the group means are different.

Explain Bayesian method for hypothesis testing.

In Bayesian hypothesis testing, hypotheses are treated as probabilistic models, and inference is based on updating beliefs using Bayes’ theorem. This approach belongs to Bayesian Statistics. Unlike classical testing, where hypotheses are rejected or not rejected, Bayesian methods evaluate how probable each hypothesis is after observing the data.

Suppose we have two competing hypotheses:

Bayesian analysis assigns prior probabilities to the hypotheses:

After observing data , we compute posterior probabilities:

using Bayes’ theorem:

where:

- likelihood under .

The central tool in Bayesian hypothesis testing is the Bayes factor.

It measures how much the data support one hypothesis over the other.

Bayesian testing often compares posterior odds:

Example: Suppose we test whether a coin is fair.

Hypothesises:

We flip the coin 10 times and observe 8 heads.

Likelihood under

Likelihood under For we assume a prior distribution for .

For example:

Then the likelihood is obtained by integrating over all possible :

Bayes Factor

Since

the data favor (the coin may not be fair).

Decision rule:

Instead of fixed rejection regions, Bayesian testing uses:

- Posterior probability comparison

or

- Bayes factor thresholds

Example rule:

Reject if:

Enlist and explain main methods of evaluation of hypothesis tests.

1. Type I Error Probability (Significance Level)

A Type I error occurs when we reject the null hypothesis even though it is true.

- is called significance level

- common values are between 0.01 and 0.05

A good test should control the probability of Type I error.

2. Type II Error Probability

A Type II error occurs when we fail to reject even though it is false.

A good test should minimize this probability.

3. Power of the Test

The power of a test is the probability of correctly rejecting the null hypothesis when it is false.

Thus

A test with higher power is better because it detects real effects more reliably.

4. Power Function

The power function describes the probability of rejecting for every possible parameter value.

Properties:

- Under :

- Under : should be as large as possible

5. Most Powerful Test

A test is most powerful if it has the largest power among all tests with the same significance level .

6. Uniformly Most Powerful Test (UMP)

A test is uniformly most powerful if it has the highest power for all parameter values in the alternative hypothesis.

7. Consistency of a Test

A test is consistent if its power approaches 1 as the sample size increases when the alternative hypothesis is true.

Thus with large samples, the test will almost surely detect the false null hypothesis.

What are the interval estimators in statistical inference?

Interval estimators (confidence intervals) provide a range of plausible values for an unknown parameter rather than a single estimate.

Several methods exist for constructing interval estimators.

- Pivot (Pivotal Quantity) Method.

- Test Inversion Method.

- Likelihood-Based Method.

- Asymptotic (Normal Approximation) Method.

- Bayesian Credible Intervals.

- Bootstrap Method.

Explain and give a simple example for Pivot (Pivotal Quantity) Method.

The Pivot Method is one of the classical approaches to constructing confidence intervals in Statistical Inference. It is based on a pivotal quantity, which is a function of the sample and the parameter whose probability distribution does not depend on the unknown parameter.

Definition of a Pivot

A pivotal quantity satisfies:

This allows us to use known distributions to find intervals for .

Steps to Construct an Interval Using a Pivot

- Identify a pivotal qunatity .

- Find its distribution, usually standard (Normal, t, , etc).

- Solve the probability statement for :

to get the confidence interval.

Example:

Suppose we have:

where is a known value.

We want a 95% confidence interval for .

Step 1: Identify pivot:

The natural pivot is the standardized sample mean:

- is standadrd normal.

- its distrubution does not depend on .

Step 2: Use Probability Statement

For 95% confidence:

where .

Step 3: Solve for

Multiply both sides by and solve:

This is the 95% confidence interval for .

Explain Test Inversion method for finding confidence intervals.

The Test Inversion Method is a classical approach in Statistical Inference for constructing confidence intervals by inverting a family of hypothesis tests. Instead of directly finding a formula for the interval, you identify all parameter values that would not be rejected by a suitable hypothesis test.

Idea:

- Consider a parameter .

- For each candidate value perform a hypothesis test:

- Collect all for which was not rejected.

This set of forms the confidence interval.

Steps:

- Choose a test statistic fro testing .

- Determine the rejection region based on the chosen significance level .

- Invert the test: find all such that does not fall in the rejection region.

- The resulting set of values is the confidence interval.

Explain Likelihood-Based Method for Interval Estimation.

The Likelihood-Based Method constructs confidence intervals using the likelihood function of the parameter. It is widely used in Statistical Inference and is closely related to maximum likelihood estimation (MLE) and likelihood ratio tests.

Parameter values that produce likelihoods close to the maximum likelihood are considered plausible values for the parameter.

To construct an interval, we compare the likelihood at with the maximum likelihood.

Likelihood ratio:

Values of for which this ratio is not too small are included in the confidence interval.

A common form for likelihood ratio confidence interval is

where

- is the log likelihood.

- is the MLE.

- is the chi-square critical value with 1 degree of freedom.

This set of values forms the confidence interval.

Explain Asymptotic (Normal Approximation) Method for Interval Estimation.

The Asymptotic Method constructs confidence intervals using the fact that many estimators become approximately normally distributed for large sample sizes. This method is widely used in Statistical Inference. The word asymptotic means that the result becomes accurate as the sample size becomes large.

Key Idea:

For many estimators :

More precisely:

This allows us to construct a confidence interval using the standard normal distribution.

General formula for the interval:

Using the standard normal quantile :

where

- is estimator

- - variance of the estimator

If the variance is unknown, we replace it with an estimate.

Explain Bootstrap Method for Interval Estimation.

The Bootstrap Method is a computational technique used to estimate confidence intervals when the sampling distribution of an estimator is difficult to derive analytically. It is widely used in Statistical Inference. The idea is to approximate the sampling distribution of an estimator by resampling from the observed data.

Basic Idea:

Normally, to understand the distribution of an estimator , we would repeatedly sample from the population. In practice, we only have one sample. Bootstrap solves this by treating the observed sample as an approximation of the population and repeatedly sampling from it. Thus we simulate many new datasets from the original data.

Bootstrap procedure:

Suppose we have a sample:

and an estimator .

- Draw a bootstrap sample of size from the original data with replacement.

- Compute the estimator for this sample